VMware Converter is a really nice tool for converting physical servers to virtual machines. It’s free and fairly simple to use. Most of the time I have used it without any issues, but a recent case where a P2V’d VM failed to boot inspired me to write this blog post.

Back in 2010, when I was first implementing virtualization at my company, I ran into an issue with a P2V of a W2K8 server that wouldn’t boot up after the conversion completed. The VM would go into a continuous loop of blue screens and reboots. This was very annoying and made it difficult to determine the source of the problem.

The blue screen showed the generic message that hardware on the server had changed. DUH…I just P2V’d you from a Dell M610 blade so of course your hardware has changed, you silly server. The fun part was finding out what the server didn’t like about this change that caused it to blue screen.

The blue screen error code indicated that something about the disk had changed. With that lead I started looking at all of the VM settings, in particular the disk settings. I noticed that the SCSI controller was set to Paravirtualized SCSI, which was different from all of the other VMs I had P2V’d that day. Their controllers were set as LSI Logic controllers.

I figured something must have become confused during the conversion of this blade, so I changed the SCSI controller on the VM to LSI Logic SAS within the vSphere client. The settings change worked, and the VM powered up normally.

Fast forward to August 2013, when I encountered a similar problem. This time I was converting a Dell 1855 blade running Windows Server 2003 R2, which also suffered from the infamous blue screen reboot loop. Having seen this issue before, I checked the disk controller setting and found that the disk had been converted with an IDE controller. This server was not going to boot from an IDE controller, so I needed to change the controller type. When I attempted to change it using the vSphere client, however, the option was not available.

A quick search on the internet led me to VMware KB article 1016192 (Converting a virtual IDE disk to a virtual SCSI disk). According to the article, if no controller is selected during the conversion process, the VM is created with an IDE controller for the VM’s system drive.

To fix the issue you have two options:

1. Re-run the conversion, making sure you select a controller type. Do not leave it set to the default of auto-select.

2. Manually change the adapter type inside the vmdk file.

I was under a time constraint and did not have time to re-run the P2V, so I opted to manually change the controller type. Using the instructions from the article, I was able to successfully change the controller.

To manually change the disk controller type I used the following steps:

- Log in to the host where the VM resides. If SSH is not enabled, you will need to enable that option before you can connect.

- Once logged in to the host, navigate to the VM’s folder on the datastore.

# cd /vmfs/volumes/<datastore_name>/<vm_name>/

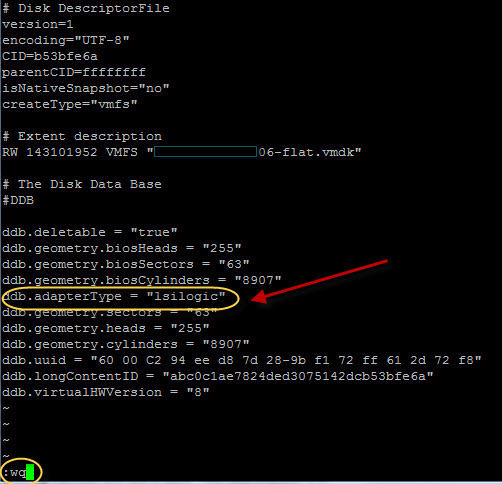

- Using the vi editor, open the vmdk file of the VM with the following command.

vi nameofserverfile.vmdk

- Find the line that begins with ddb.adapterType and change its value from ide to lsilogic. In vi, you can type r over a character followed by the replacement character, repeating until the line reads:

ddb.adapterType = "lsilogic"

- Press the ESC key, then type :wq and press Enter to save the file and exit.

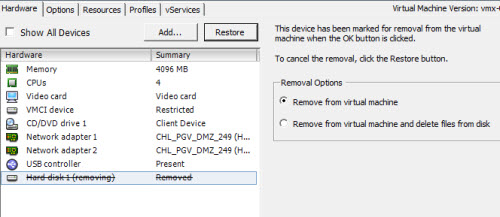

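- From vSphere Client, edit the VM’s settings and remove the hard disk from the virtual machine. Remove it from the VM only; do not delete the files from disk.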

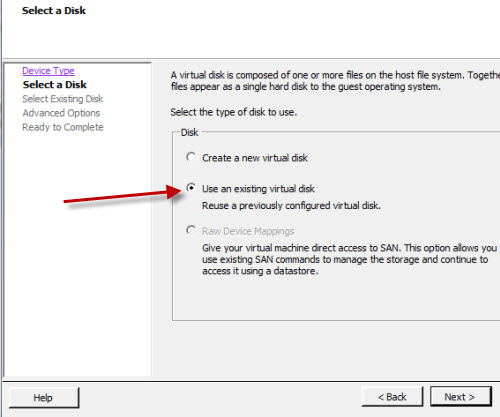

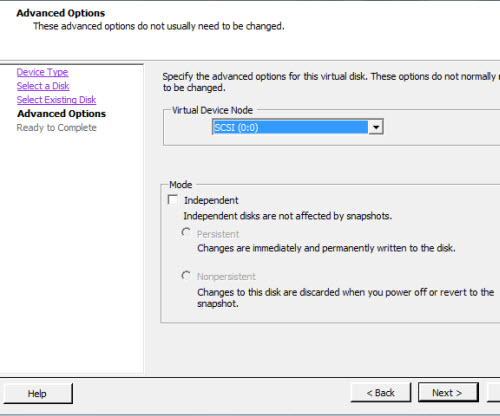

- After the disk has been removed, you will need to go back into settings and re-add it as an existing virtual disk, this time attached to a SCSI controller.
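
If you would rather not retype the line character by character in vi, the same one-line change to the descriptor can be sketched as a small script. This is only a sketch under assumptions: it runs against a throwaway sample file (the name sample.vmdk is made up), it assumes the line reads exactly ddb.adapterType = "ide", and the second line of the sample is just filler to show that other lines are left alone. It is not taken from the KB article.

```shell
#!/bin/sh
# Sketch only: demonstrates the edit on a throwaway sample descriptor,
# not on a real VM disk.
set -eu

workdir=$(mktemp -d)
descriptor="$workdir/sample.vmdk"   # hypothetical file name

# Minimal stand-in for the relevant part of a descriptor file.
cat > "$descriptor" <<'EOF'
ddb.adapterType = "ide"
ddb.virtualHWVersion = "7"
EOF

# Keep a copy of the original before changing anything.
cp "$descriptor" "$descriptor.bak"

# Rewrite only the adapter type line; every other line passes through unchanged.
sed 's/^ddb\.adapterType = "ide"$/ddb.adapterType = "lsilogic"/' \
    "$descriptor.bak" > "$descriptor"

# Show the result so it can be checked by eye.
grep '^ddb.adapterType' "$descriptor"
```

On a real host you would point this at the VM’s own descriptor file with the VM powered off, and keep the .bak copy until the VM has booted successfully. The straight double quotes matter here, since curly quotes pasted from a web page will not match.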